Thanks FXEZ for putting up this thread and thanks guys for your valuable contributions. Very interesting stuff here that really helps separate the wheat from the chaff. :-)


Although I have zero experience with R (or things like matlab) and relatively modest experience with other languages (mostly mql, c and some web, html..), over the years I've managed to run quite a few experiments on algo trading (and on trading probability and statistics as a whole). So I am able to share some of my experiences and insights. I have no intention of posting my code here, only my theoretical conclusions and philosophical insights based on my research. If the OP considers the following off topic, feel free to delete my post! ...

FXEZ, great post. I do have to point out, though, that the random number algorithm in R, like any other algorithm-based RNG, is pseudo-random, and conclusions based on pseudo-random numbers could deviate from their intended purpose. A solution to this problem is to use a hardware random number generator. http://ubld.it/products/truerng-hard...ber-generator/ https://en.wikipedia.org/wiki/Hardwa...mber_generator Carry on

The R code then imported the data and cleaned it. It calculated the approximate dollar value of a 1 pip move. The analysis is then based on this. ** FXEZ (or anyone else) -- do you think this is the best way to do this? The other way is to do the linear regression analysis on raw prices ... but the problem is that some currency pairs are orders of magnitude different (think JPY crosses: xxx.xx and EURUSD: x.xxxx). This makes the coefficient very small or very large. I have thought of doing % change from previous bar also ... but want to get the...

Now that we have the data frame 'x' of all prices (data massaged and scaled as described above), we would normally do a linear regression of each pair on each other pair to calculate the coefficients and then calculate the spread. The problem is that if we regress x on y, we get coefficient c. If we then regress y on x, we might not get coefficient 1/c.
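The asymmetry described above can be checked numerically. The following is a minimal sketch on made-up data (the series `x` and `y` are hypothetical, not real prices). It relies on the ordinary least squares identity that the product of the two slopes equals the squared correlation, so the slopes are reciprocal only when the fit is perfect. As one possible symmetric alternative, it also computes a total least squares slope from the first principal component via `prcomp`; that choice is an assumption here, not something proposed in the thread.

```r
# Hypothetical data: two noisy, related series (not real prices).
set.seed(1)
x <- cumsum(rnorm(500))
y <- 0.8 * x + rnorm(500, sd = 2)

b_yx <- unname(coef(lm(y ~ x))[2])  # slope of y regressed on x
b_xy <- unname(coef(lm(x ~ y))[2])  # slope of x regressed on y
r    <- cor(x, y)

# OLS identity: b_yx * b_xy == r^2, so b_xy == 1 / b_yx only when |r| == 1.
cat("b_yx =", b_yx, " 1/b_xy =", 1 / b_xy, " r^2 =", r^2, "\n")

# Total least squares slope from the first principal component.
# It treats x and y symmetrically: swapping them gives exactly 1 / b_tls.
pc    <- prcomp(cbind(x, y))
b_tls <- unname(pc$rotation[2, 1] / pc$rotation[1, 1])
cat("b_tls =", b_tls, "\n")
```

The correlation coefficient mentioned in the reply below is scale-free for the same reason: it is the geometric mean of the two slopes (up to sign).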

One can use the correlation coefficient, which does not depend on the absolute values of the tested variables. I am also not sure that prices are normally distributed.

Thanks for your post Tardigrade, I'm glad that you're here. You make an excellent point about random number generators and their limitations. Have you used hardware-based random number generators? I wonder how easily these hardware RNGs integrate with programming environments like R or Python, and how they compare with software algorithms in terms of speed?

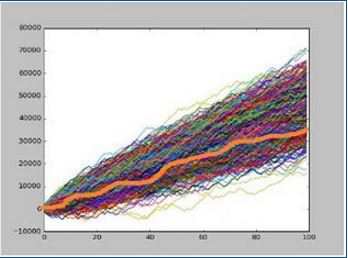

What you don't realize (at first) is that ALL of these curves are generated by a system with NEGATIVE (small, but negative) mathematical expectancy per trade! This means that eventually, over time, ALL of these curves will go to zero! However, if you look at all these positive curves (the "winners") and study each one of them individually, you will find that they all have positive expectancy per trade (based on their history), despite the fact that they are generated by a system with negative expectancy! What this means is that LUCK can...

On a long enough time line, the survival rate for everyone drops to zero
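The effect described above is easy to reproduce. The sketch below is only an illustration under assumed parameters (win probability 0.49, reward equal to risk, 100 trades per curve, 1000 curves, fixed bet size); none of these values come from the original post. It counts how many curves from a negative-expectancy system still end in profit, and shows that each of those "winners" has a positive realised expectancy per trade.

```r
set.seed(42)
p        <- 0.49   # assumed win probability (slightly below break-even)
R        <- 1      # assumed reward-to-risk ratio
n_trades <- 100
n_curves <- 1000

E <- p * (R + 1) - 1   # true expectancy per trade, negative here

# Each column is one simulated sequence of trade results (+R or -1).
outcomes <- matrix(
  ifelse(runif(n_trades * n_curves) <= p, R, -1),
  nrow = n_trades
)

final        <- colSums(outcomes)            # net result of each curve
frac_winners <- mean(final > 0)              # share of curves ending in profit
realised     <- final[final > 0] / n_trades  # realised expectancy of the winners

cat("True expectancy per trade:", E, "\n")
cat("Share of profitable curves:", frac_winners, "\n")
cat("Mean realised expectancy of winners:", mean(realised), "\n")
```

Increasing `n_trades` shrinks the share of profitable curves, which is the "long enough time line" point in numeric form.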

Can an MM strategy be found that is the inverse of % of equity sizing, such that instead of a negative drag we get a positive boost to the account?

(1+x)(1-x) >= 1
1-x^2 >= 1
-x^2 >= 0

(1+x)(1-y) >= 1
1-y >= 1/(1+x)
-y >= 1/(1+x) - 1
y <= 1 - 1/(1+x)

(1-x)(1+z) >= 1
1+z >= 1/(1-x)
z >= 1/(1-x) - 1

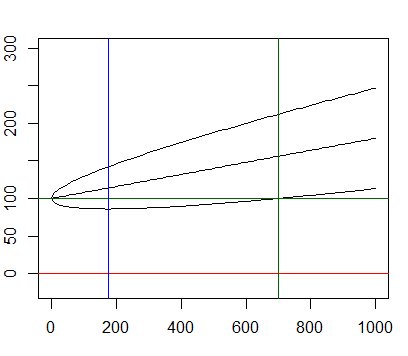

# Setup the graphics
if (dev.cur() == 1) x11()
par(mar=c(3,2,2,1))

b <- 100                      # starting balance
p <- 0.54                     # probability of winning
R <- 1                        # RRR
E <- p*(R+1)-1                # Expectancy per trade (win +R, lose -1)
v <- p*(1-p)*(R+1)^2          # Variance per trade of a +R / -1 outcome
low <- ((3*v) / (2*E))^2 / v  # trade count at the lowest point of the lower band (3 standard deviations confidence)
pos <- (9*v)/E^2              # trade count where the lower band returns to the starting balance (3 standard deviations confidence)

# Expected equity and the +/- 3 standard deviation bands
curve((b+E*x), from=0, to=1000, col='black', ylim=c(-20, 300))
curve((b+E*x+3*sqrt(v*x)), from=0, to=1000, col='black', add=T)
curve((b+E*x-3*sqrt(v*x)), from=0, to=1000, col='black', add=T)
abline(h=b, col='darkgreen')
abline(h=0, col='red')
abline(v=low, col='blue')
abline(v=pos, col='darkgreen')
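For readers wondering where `low` and `pos` come from, here is a short derivation, assuming the lower band is the curve drawn by the script (starting balance `b`, expectancy `E` > 0, per-trade variance `v`, and `x` trades):

$$
f(x) = b + E\,x - 3\sqrt{v\,x}
$$

Setting the derivative to zero gives the trade count at which the lower band bottoms out:

$$
f'(x) = E - \frac{3\sqrt{v}}{2\sqrt{x}} = 0
\quad\Longrightarrow\quad
x_{\text{low}} = \frac{9v}{4E^{2}} = \frac{1}{v}\left(\frac{3v}{2E}\right)^{2}
$$

The lower band climbs back to the starting balance when the drift equals the band width:

$$
E\,x = 3\sqrt{v\,x}
\quad\Longrightarrow\quad
x_{\text{pos}} = \frac{9v}{E^{2}} = 4\,x_{\text{low}}
$$

Both counts scale with $v/E^{2}$, so halving the edge quadruples the number of trades needed before the result is distinguishable from luck at this confidence level.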

I have drawn similar conclusions regarding the degree of luck that is present in any equity curve. Alpha appears to be a very small bias in an otherwise random outcome that, through the Law of Large Numbers, results in positive expectancy, but it is impossible to predict when the non-random features of price action will unfold. You need to be very careful when assessing the performance metrics of your system to distinguish between actual performance and results attributable to simple chance. As FXEZ has clearly demonstrated, there is considerable...

Given this feature, it is useful when assessing the performance metrics of any single trading system to plot where the historic equity curve of a return series fits in a Monte Carlo sequence (where the trade sequence is re-ordered).

Drawing conclusions from the historic equity curve alone when assessing your performance metrics can lead you into a serious trap. The orange equity curve has all the characteristics of a system with a high positive expectancy, a low drawdown, a high annual Sharpe and a good return ... however ... now for the fine print ... how much of this equity curve's performance can be attributed simply to the trade sequence?

The orange equity curve plots at the upper end of the population of series, which indicates that a large portion of the result can be attributed to the favourable nature of this particular trade sequence. The upper placement of the historic plot within the entire population of series suggests that the equity curve is dominated by simple good luck with a favourable trade sequence, as opposed to a result that typifies the performance metrics of the system.

So the moral of the story is that you should never assume that the historic equity curve of your trading system is a suitable proxy for the future unless you closely consider the impact that the sequence of trade results may have had in biasing historic performance results.
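A minimal sketch of the re-ordering idea, under stated assumptions: the `trades` vector is a made-up 100-trade backtest in R-multiples, bet size is fixed, and maximum drawdown is used as the path-dependent metric. With fixed bet sizing the final result is the same for every ordering, so what the shuffle reveals is how lucky the historic path was, not how lucky the total was.

```r
set.seed(7)
# Hypothetical backtest: 100 trade results in R-multiples (not real data).
trades <- sample(c(-1, 2), 100, replace = TRUE, prob = c(0.6, 0.4))

# Largest peak-to-trough decline of the cumulative result.
max_drawdown <- function(pl) {
  equity <- c(0, cumsum(pl))
  max(cummax(equity) - equity)
}

hist_dd <- max_drawdown(trades)

# Re-order the same trades (sampling without replacement) many times.
shuffled_dd <- replicate(2000, max_drawdown(sample(trades)))

# Fraction of re-orderings with a drawdown no worse than the historic one.
pct_rank <- mean(shuffled_dd <= hist_dd)

cat("Historic max drawdown:", hist_dd, "\n")
cat("Share of re-orderings with drawdown <= historic:", pct_rank, "\n")
```

A small `pct_rank` would suggest the historic sequence was unusually kind; a value near 0.5 would suggest it was typical for this set of trades.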

You are looking for x such that this is greater than or equal to one (a boost, or at least no drag):

(1+x)(1-x) >= 1

1-x^2 >= 1

-x^2 >= 0

Obviously no boost is going to happen, since x^2 is non-negative.

The optimal x is no trading (0%) to get no negative drag!

curve((1+x)*(1-x))

Therefore the bet size has to change from one trade to the next. But since your (non-)edge doesn't change, is that sensible?

Here I distinguish the win-loss and the loss-win cases because after the first trade we can decide to change the risk.

y <= 1 - 1/(1+x)

You can plot the functions x and 1 - 1/(1+x) to convince yourself that y is always less than x. After a winner you need to preserve the gain.

curve( 1 - 1/(1+x) )

For the second case:

(1-x)(1+z) >= 1

1+z >= 1/(1-x)

z >= 1/(1-x) - 1

Again you can plot 1/(1-x) - 1 to convince yourself it is above x. You have to increase after a loser to recoup the loss. So the answer is YES... if you dare entering the land of Lord Martin Gale....

curve(1/(1-x) - 1)

[99] 49.00000000 99.00000000 Inf

[50] 0.96078431 1.00000000 1.04081633
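The two printed fragments above are consistent with evaluating 1/(1-x) - 1 on a grid from 0 to 1 in steps of 0.01 (that grid is an inference from the numbers, not something stated in the post). A small sketch under that assumption tabulates both bounds: the largest risk `y` allowed after a win and the smallest risk `z` needed after a loss.

```r
x <- seq(0, 1, by = 0.01)   # assumed grid of first-trade risk fractions

y_max <- 1 - 1 / (1 + x)    # win then loss: largest y that avoids drag
z_min <- 1 / (1 - x) - 1    # loss then win: smallest z that recoups the loss

# A few rows of the table; z_min blows up as x approaches 1.
print(data.frame(x = x, y_max = y_max, z_min = z_min)[c(2, 11, 51, 100, 101), ])
```

At x = 0.5 the required follow-up risk is already 100% of the remaining balance, which is why the "Martin Gale" route ends badly for any realistic losing streak.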

It sounds like you're using sampling with replacement of the original system's trade outcomes, as in a bootstrapping procedure?

Can you talk a bit about the procedure you're using to sample the additional curves or create the confidence interval? If you are sampling with replacement, I think you are basically assuming that the population is your total series of trade outcomes (profit/loss) for the system, trade by trade.

When you randomly sample with replacement from that population of trade outcomes, you create new trade series that can have different paths and different expectancies, because one curve may oversample winning trades while another may oversample losing trades. So going forward, the implication seems to be that even though your long-term expectancy is X, in the short term you may end up with one of the poorer performers rather than the great long-term performer, even though the population contains all the trades in the orange curve. In the short term you may do much better or worse than your historic results. Am I in the ballpark?
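For contrast with simple re-ordering, the bootstrap described in this question can be sketched as follows. The `trades` vector is again hypothetical, and the 95% percentile interval is just one common way to summarise the spread; neither is taken from the thread.

```r
set.seed(11)
# Hypothetical backtest: 100 trade results in R-multiples (not real data).
trades <- sample(c(-1, 2), 100, replace = TRUE, prob = c(0.6, 0.4))

# Resample the trade list WITH replacement; each resample can over- or
# under-represent winners, so its per-trade expectancy differs.
boot_exp <- replicate(
  5000,
  mean(sample(trades, length(trades), replace = TRUE))
)

ci <- quantile(boot_exp, c(0.025, 0.975))

cat("Historic expectancy per trade:", mean(trades), "\n")
cat("Bootstrap 95% interval:", ci[1], "to", ci[2], "\n")
```

Unlike a pure re-ordering, each bootstrap series has its own total, so this procedure speaks to uncertainty in the expectancy itself rather than only in the path.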

Thanks to you mate for getting the cogs whirring in this thread :-) Yep, we are simply reordering the sequence of trade results over a 100-trade backtest for two different systems, each with a supposed positive expectancy. The point of these examples is that it is important to understand the distribution that your backtest comes from when all other variables are held constant aside from trade sequence. Furthermore, the shape, concentration and dispersion of returns in a Monte Carlo sequence, when compared to other examples, gives you...

The risk is set at 1% by default, but the actual size of the risk used in practice will only affect the gradient of the equity curves and not the overall dynamics.

The variable reward, or payoff, is simulated by sampling from a distribution generated from minimum, maximum and mean values supplied to the simulation function. It's assumed that the payoff is normally distributed with a skew towards smaller rather than larger payoffs, i.e. you won't pull large winners consistently but they do happen. It can also be considered a lo-fi way to model trailing stops, targets that are set by arbitrary goals (e.g. 2 times risk), and trades that get cut because they are wrong (or have demonstrated good reasons to stop).

The final assumption, that the trades are independent of each other, does disregard trader psychology, but it simplifies the modelling and keeps us focused on the task at hand: exploring the impact of expectancy and risk/reward payoff on the equity curve.

Shortcomings

The model doesn't stop trading if equity goes negative - this keeps the code simpler and helps to illustrate the equity curve dynamics better in the plume plot.

The modelling does NOT take into account transaction fees.

Running the script

Linux or BASH console

On Linux simply execute the run script:

$ ./run.sh

> source('C:\\Scripts\\QuantitativeResearch\\Expectancy\\expectancy.R')

Equity curve plume plot

# Simulate numTrades independent trades with win probability p and return
# the realised expectancy per trade; the trade details are attached as
# the "data" attribute.
expectancy <- function(p, reward=1, risk=1, numTrades=100) {
  outcomes <- runif(numTrades, min=0, max=1)
  winners <- outcomes <= p
  losers <- outcomes > p
  structure(
    (sum(winners*reward) - sum(losers*risk))/numTrades,
    data=data.frame(
      pr.win=p,
      outcomes.pr=outcomes,
      outcome=outcomes <= p,
      pay.off=reward,
      risk=risk
    ),
    class='expectancyAnalysis'
  )
}

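# Run numSimulations independent simulations, each with payoffs drawn from
# a grid of values weighted by payoff.probs.
expectancyMC <- function(
  p, payoff.range=c(0.1, 10), payoff.mean=2, payoff.probs=NA, risk=1,
  numTrades=100, numSimulations=10000
) {
  payoffs <- seq(payoff.range[1], payoff.range[2], 0.1)
  # Default weights: normal density with sd = payoff.mean/4 below the mean
  # and sd = payoff.mean/2 at or above it (sample() normalises the weights).
  # all() keeps the test valid when a vector of weights is supplied.
  if (all(is.na(payoff.probs))) {
    payoff.probs <- c(
      dnorm(payoffs[payoffs < payoff.mean],payoff.mean,payoff.mean/4.0),
      dnorm(payoffs[payoffs >= payoff.mean],payoff.mean,payoff.mean/2.0)
    )
  }
  result <- sapply(
    1:numSimulations,
    function(x) expectancy(
      p, sample(payoffs, numTrades, TRUE, payoff.probs), risk, numTrades),
    simplify=FALSE
  )
  attr(result, "payoffs") <- payoffs
  attr(result, "payoff.probs") <- payoff.probs
  result
}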

expectationAnalysis <- function(
  experimentName, prWin, numTrades,
  payoff.range, payoff.mean,
  risk=1, openingBalance=100, simulations=100000, outputPath="~/tmp",
  saveEquityCurves=F, doEquityCurveAnalysis=T
) {
  result <- expectancyMC(p=prWin, payoff.range=payoff.range, payoff.mean=payoff.mean, risk=risk, numTrades=numTrades, numSimulations=simulations)

  # Mean expectation from the simulations
  theExps <- as.numeric(result)
  meanExp <- mean(theExps)
  cat("Mean expectancy is: ", meanExp, "\n")

  if (doEquityCurveAnalysis) {
    # Calculate equity curves
    # Assumes risk is expressed as a percentage of capital, e.g. risk = 1 means each trade risks 1% of the current balance.
    equityCurves <- sapply(
      result,
      function(x) {
        data <- attr(x, 'data')
        balances <- c(openingBalance)
        for (idx in 2:nrow(data)) {
          if ( balances[idx-1] <= 0 ) {
            newBalance <- 0
          } else {
            newBalance <- ifelse(data$outcome[idx-1],
              balances[idx-1]*(1 + data$pay.off[idx-1]/100),
              balances[idx-1]*(1 - data$risk[idx-1]/100))
          }
          balances <- c(balances, newBalance)
        }
        balances
      }
    )

    # Plot equity curves
    col.best <- rgb(0, 204/255, 51/255)
    col.average <- rgb(51/255, 153/255, 1)
    col.worst <- rgb(154/255, 0, 51/255)
    col.plume <- rgb(0.8, 0.8, 0.8, .5)
    png(
      paste(outputPath, paste(experimentName, "_equitycurves.png", sep=''), sep='/'),
      width=1920, height=1080
    )
    # Curves with the highest and lowest final balance (row numTrades is the last point)
    best <- which.max(equityCurves[numTrades,])
    worst <- which.min(equityCurves[numTrades,])
    plot(
      x=c(1, numTrades), y=range(c(0, pretty(equityCurves))), type='n',
      main=paste("Equity curves (Experiment: ", experimentName, ")", sep=""),
      xlab='Trade', ylab='Equity ($)'
    )
    abline(h=openingBalance, lwd=1, col='black')
    # Shade the full range of simulated balances at each trade (the "plume")
    lower <- apply(equityCurves, 1, min)
    upper <- apply(equityCurves, 1, max)
    polygon(c(1:numTrades, numTrades:1), c(lower, rev(upper)), col=col.plume, border=NA)
    lines(x=1:numTrades, y=equityCurves[, best], lwd=3, col=col.best)
    lines(x=1:numTrades, y=apply(equityCurves, 1, mean), lwd=2, lty=2, col=col.average)
    lines(x=1:numTrades, y=equityCurves[, worst], lwd=3, col=col.worst)
    leg1 <- legend(
      "topleft",
      c("Best", "Worst", "Average", "Data range"),
      col=c(col.best, col.worst, col.average, col.plume), bty='n', lty=c(1,1,2,1), lwd=c(2,2,2,0),
      pch=c(NA,NA,NA, 15), cex=2, pt.cex=2
    )
    dev.off()

    # Save equity curves?
    if (saveEquityCurves) {
      write.csv(equityCurves, paste(outputPath, paste(experimentName, "_equitycurves.csv", sep=''), sep='/'))
    }
  }

  # Plot expectation results
  sdExp <- sd(theExps)
  png(
    paste(outputPath, paste(experimentName, "_expectancy_hist.png", sep=''), sep='/'),
    width=1920, height=1080
  )
  expDensity <- density(theExps)
  xmax <- max(expDensity$x)
  hist(theExps, prob=T, xlab=sprintf("Expectancy (p=%3.2f)",prWin),
    main=sprintf("Experiment %s expectancy distribution (E=%3.3f, N=%d)", experimentName, meanExp, simulations),
    pty='m', xlim = c(0, round(xmax) + sign(xmax) * 0.5)
  )
  # Mark the mean (red) and one and two standard deviations below it (green)
  abline(v=meanExp, lty=1, lwd=5, lend=1, col=rgb(1,0,0,0.5))
  abline(v=meanExp-c(1:2)*sdExp, lwd=5, lend=1, col=rgb(0,1,0,0.5))
  lines(expDensity, lty=2, lwd=2, col='blue')
  text(
    meanExp-c(1:2)*sdExp, par()$usr[4] *.8,
    c(expression(-sigma), expression(-2*sigma)), adj=c(0,-.6)
  )
  dev.off()

  # Save the expectancies
  write.csv(theExps, paste(outputPath, paste(experimentName, "_expectancies.csv", sep=''), sep='/'))

  # Plot a histogram of payoff samples drawn with the payoff probabilities
  png(
    paste(outputPath, paste(experimentName, "_payoff_hist.png", sep=''), sep='/'),
    width=1920, height=1080
  )
  hist(
    sample(attr(result, "payoffs"), numTrades, T, attr(result, "payoff.probs")),
    prob=T, xlab = "Payoff",
    main=paste("Histogram of payoff samples (N=", numTrades, ")", sep="")
  )
  dev.off()
}

# Close any open graphical devices (useful for script runtime errors etc).
while (! is.null(dev.list()) ) dev.off()

outputPath <- "./output"
# Make sure the output directory exists before png() and write.csv() use it.
dir.create(outputPath, showWarnings=FALSE, recursive=TRUE)

for (prWin in seq(0.25, 0.90, 0.05)) {
  cat("Pr(win) =", prWin, "\n")
  result <- expectationAnalysis(
    # round() guards against floating point error in prWin*100 before truncation
    experimentName=sprintf("%dperc_variaible_payoff", as.integer(round(prWin*100))),
    prWin=prWin, numTrades=100,
    payoff.range=c(0.1,10), payoff.mean=2,
    risk=1, openingBalance=100, simulations=10000, outputPath=outputPath,
    saveEquityCurves=T, doEquityCurveAnalysis=T
  )
}