Take this for what it's worth.

Hey Bob,

As soon as I saw the line “It expects me…” in your write-up on the Roshambot, I knew you understood the point of the exercise. I’ve seen that thing absolutely spank people who were just playing casually. Some were never convinced it wasn’t cheating (and that is a relatively weak version). Students of artificial intelligence systems have yearly competitions between bots to determine who has the best strategy. The first year of the competition, “Iocaine Powder” won easily:

“Dan Egnor has written an incredibly strong Rock-Paper-Scissors program, which simply dominated every aspect of the competition. Of the 25 independent tournaments run for the Open Competition, Iocaine Powder won ALL of them. In the six sets of 25 tournaments conducted for the "Best of the Best" competition, Iocaine Powder finished first every time.

In many ways, Dan's program is a generation ahead of its time. I believe it would have been a worthy winner of the second RoShamBo competition, after all the lessons had been learned and ideas shared from the first set of tournaments. By co-operating with his fellow alumni from Caltech, he greatly improved on a previous version of the program, which was already strong enough to win the competition!

The program name refers to a scene from the movie "The Princess Bride". The Man In Black has added iocaine powder, a deadly poison, to one of two goblets of wine. Vizzini the Sicilian must choose a goblet, then each will drink their wine. It is a battle of wits to the death, as Vizzini must deduce what level of trickery the Man In Black used in choosing the goblet to poison. If you don't know the outcome, rent the movie (it is wonderful). Vizzini begins his reasoning with:

"Now, a clever man would put the poison into his own goblet, because he would know that only a great fool would reach for what he was given. I am not a great fool, so I can clearly not choose the wine in front of you. But you must have known I was not a great fool, you would have counted on it, so I can clearly not choose the wine in front of me..."”

Egnor took a huge shortcut in design that actually gave his program more predictive power. The other programmers assumed that a competing program’s design would reveal itself through a statistical analysis of its throws. What they failed to take into account is that the competition would be doing the same thing, so any anomaly in the data was just as much a reflection of their own algorithm as of their competitor’s. If their program saw a trend and acted upon it, the competition would act on that in turn, thereby affecting the data. Each program was oblivious to its own effect on future outcomes. Egnor realized this and simply asked himself, “Rather than mine the data for anomalies, why not just make the assumption that it is trying to figure out what I’m gonna throw and then simply throw its trump’s trump?” So while the typical program was attempting to find out how the competitor’s bot worked, Egnor’s bot simply watched for signs of the competitor’s conclusions and then trumped them. If the competitor’s bot somehow found this pattern, Egnor’s program just bumped the level of deception up a notch. The losing programs sought to discover the competition’s design from the history of throws, while Egnor’s focused on the idea that its competitor has intentions.
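
To make the “trump’s trump” idea concrete, here is a minimal Python sketch. It is not Egnor’s actual code: the function names (`trump`, `predicted_opponent_throw`, `second_guess`), the most-frequent-throw predictor, and the arbitrary opening guess are all assumptions made for illustration. It assumes the opponent expects our most common throw and plays whatever beats it, and then we throw what beats that. Each extra `level` of deception assumes the opponent has caught on and second-guesses one step further.

```python
from collections import Counter

# For each throw (key), the throw that beats it (value).
BEATEN_BY = {"rock": "paper", "paper": "scissors", "scissors": "rock"}


def trump(move):
    """Return the throw that beats `move`."""
    return BEATEN_BY[move]


def predicted_opponent_throw(my_history):
    """Guess the opponent's next throw from OUR OWN past throws.

    Assumption: the opponent expects us to repeat our most frequent
    throw and will play whatever beats it.
    """
    if not my_history:
        return "rock"  # arbitrary opening guess (assumption)
    expected_from_us = Counter(my_history).most_common(1)[0][0]
    return trump(expected_from_us)


def second_guess(my_history, level=0):
    """Throw the trump of the opponent's predicted throw.

    Each extra level assumes the opponent anticipated our previous
    choice and will trump it, so we trump that instead.
    """
    throw = trump(predicted_opponent_throw(my_history))
    for _ in range(level):
        throw = trump(trump(throw))
    return throw


if __name__ == "__main__":
    history = ["rock", "rock", "paper"]
    for level in range(3):
        print(level, second_guess(history, level))
```

With a history of two rocks and a paper, level 0 throws scissors. Since there are only three throws to choose from, bumping the deception up three levels brings you back to the throw you started with, so in this sketch there are only ever three distinct answers to pick between.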

So how does this relate to trading? Well, most people approach the markets from a design stance. How is the market designed? What is the hidden order? But we’re going to take the intentional stance, one where we care less about what the hidden order is than about what we think others think it is. While traders are watching the market, we will be watching the traders. The market data is similar to the throw history of the two competing bots. The traders will search that history for design while we watch the traders’ conclusions about that history. That way, we can throw paper when they throw rock. We will buy when they sell.

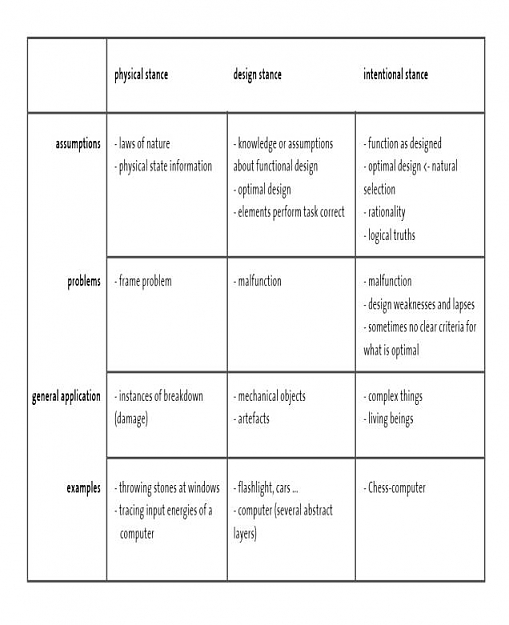

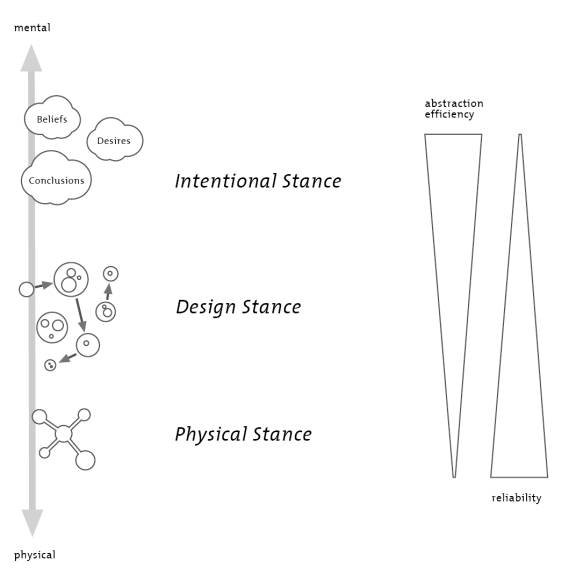

I’ve included more about the design and intentional stances below. These ideas were developed by Daniel Dennett, a cognitive scientist, philosopher, evolutionary biologist, etc. The guy is a genius. The material is purely academic, but I find it interesting. It is by no means compulsory. I grabbed it from a website, by the way, so it’s not my write-up:

“According to Daniel Dennett, there are three different strategies that we might use when confronted with objects or systems: the physical stance, the design stance, and the intentional stance. Each of these strategies is predictive. We use them to predict and thereby to explain the behavior of the entity in question. (‘Behavior’ here is meant in a very broad sense, such that the movement of an inanimate object—e.g., the turning of a windmill—counts as behavior.) Since the intentional stance is best understood by contrast with the physical and the design stance, these other two stances will be discussed first.

The Physical Stance and the Design Stance

The physical stance stems from the perspective of the physical sciences. To predict the behavior of a given entity according to the physical stance, we use information about its physical constitution in conjunction with information about the laws of physics. Suppose I am holding a piece of chalk in my hand and I predict that it will fall to the floor when I release it. This prediction relies on (i) the fact that the piece of chalk has mass and weight; and (ii) the law of gravity. Predictions and explanations based on the physical stance are exceedingly common. Consider the explanations of why water freezes at 32 degrees Fahrenheit, how mountain ranges are formed, or when high tide will occur. All of these explanations proceed by way of the physical stance.

When we make a prediction from the design stance, we assume that the entity in question has been designed in a certain way, and we predict that the entity will thus behave as designed. Like physical stance predictions, design stance predictions are commonplace. When in the evening a student sets her alarm clock for 8:30 a.m., she predicts that it will behave as designed: i.e., that it will buzz at 8:30 the next morning. She does not need to know anything about the physical constitution of the alarm clock in order to make this prediction. There is no need, for example, for her to take it apart and weigh its parts and measure the tautness of various springs. Likewise, when someone steps into an elevator and pushes "7," she predicts that the elevator will take her to the seventh floor. Again, she does not need to know any details about the inner workings of the elevator in order to make this prediction.

Design stance predictions are riskier than physical stance predictions. Predictions made from the design stance rest on at least two assumptions: first, that the entity in question is designed as it is assumed to be; and second, the entity will perform as it is designed without malfunctioning. The added risk almost always proves worthwhile, however. When we are dealing with a thing that is the product of design, predictions from the design stance can be made with considerably more ease than the comparable predictions from the physical stance. If the student were to take the physical stance towards the alarm clock in an attempt to predict whether it will buzz at 8:30 a.m., she would have to know an extraordinary amount about the alarm clock’s physical construction.

This point can be illustrated even more dramatically by considering a complicated designed object, like a car or a computer. Every time you drive a car you predict that the engine will start when you turn the key, and presumably you make this prediction from the design stance; that is, you predict that the engine will start when you turn the key because that is how the car has been designed to function. Likewise, you predict that the computer will start up when you press the "on" button because that is how the computer has been designed to function. Think of how much you would have to know about the inner workings of cars and computers in order to make these predictions from the physical stance!

The fact that an object is designed, however, does not mean that we cannot apply the physical stance to it. We can, and in fact, we sometimes should. For example, to predict what the alarm clock will do when knocked off the nightstand onto the floor, it would be perfectly appropriate to adopt the physical stance towards it. Likewise, we would rightly adopt the physical stance towards the alarm clock to predict its behavior in the case of a design malfunction. Nonetheless, in most cases, when we are dealing with a designed object, adopting the physical stance would hardly be worth the effort. As Dennett states, "Design-stance prediction, when applicable, is a low-cost, low-risk shortcut, enabling me to finesse the tedious application of my limited knowledge of physics." (Dennett 1996)

The sorts of entities so far discussed in relation to design-stance predictions have been artifacts, but the design stance also works well when it comes to living things and their parts. For example, even without any understanding of the biology and chemistry underlying anatomy we can nonetheless predict that a heart will pump blood throughout the body of a living thing. The adoption of the design stance supports this prediction; that is what hearts are supposed to do (i.e., what nature has "designed" them to do).

The Intentional Stance

As already noted, we often gain predictive power when moving from the physical stance to the design stance. Often, we can improve our predictions yet further by adopting the intentional stance. When making predictions from this stance, we interpret the behavior of the entity in question by treating it as a rational agent whose behavior is governed by intentional states. (Intentional states are mental states such as beliefs and desires which have the property of "aboutness," that is, they are about, or directed at, objects or states of affairs in the world. See intentionality.) We can view the adoption of the intentional stance as a four-step process. (1) Decide to treat a certain object X as a rational agent. (2) Determine what beliefs X ought to have, given its place and purpose in the world. For example, if X is standing with his eyes open facing a red barn, he ought to believe something like, "There is a red barn in front of me." This suggests that we can determine at least some of the beliefs that X ought to have on the basis of its sensory apparatus and the sensory exposure that it has had. Dennett (1981) suggests the following general rule as a starting point: "attribute as beliefs all the truths relevant to the system’s interests (or desires) that the system’s experience to date has made available." (3) Using similar considerations, determine what desires X ought to have. Again, some basic rules function as starting points: "attribute desires for those things a system believes to be good for it," and "attribute desires for those things a system believes to be best means to other ends it desires." (Dennett 1981) (4) Finally, on the assumption that X will act to satisfy some of its desires in light of its beliefs, predict what X will do.

Just as the design stance is riskier than the physical stance, the intentional stance is riskier than the design stance. (In some respects, the intentional stance is a subspecies of the design stance, one in which we view the designed object as a rational agent. Rational agents, we might say, are those designed to act rationally.) Despite the risks, however, the intentional stance provides us with useful gains of predictive power. When it comes to certain complicated artifacts and living things, in fact, the predictive success afforded to us by the intentional stance makes it practically indispensable. Dennett likes to use the example of a chess-playing computer to make this point. We can view such a machine in several different ways:

- as a physical system operating according to the laws of physics;

- as a designed mechanism consisting of parts with specific functions that interact to produce certain characteristic behavior; or

- as an intentional system acting rationally relative to a certain set of beliefs and goals.

Given that our goal is to predict and explain a given entity’s behavior, we should adopt the stance that will best allow us to do so. With this in mind, it becomes clear that adopting the intentional stance is for most purposes the most efficient and powerful way (if not the only way) to predict and explain what a well designed chess-playing computer will do. There are probably hundreds of different computer programs that can be run on a PC in order to convert it into a chess player. Though the computers capable of running these programs have different physical constitutions, and though the programs themselves may be designed in very different ways, the behavior of a computer running such a program can be successfully explained if we think of it as a rational agent who knows how to play chess and who wants to checkmate its opponent’s king. When we take the intentional stance towards the chess-playing computer, we do not have to worry about the details of its physical constitution or the details of its program (i.e., its design). Rather, all we have to do is determine the best legal move that can be made given the current state of the game board. Once we treat the computer as a rational agent with beliefs about the rules and strategies of chess and the locations of the pieces on the game board, plus the desire to win, it follows that the computer will make the best move available to it.

Of course, the intentional stance will not always be useful in explaining the behavior of the chess-playing computer. If the computer suddenly started behaving in a manner inconsistent with something a reasonable chess player would do, we might have to adopt the design stance. In other words, we might have to look at the particular chess-playing algorithm implemented by the computer in order to predict what it will subsequently do. And in cases of more extreme malfunction—for example, if the computer screen were suddenly to go blank and the system were to freeze up—we would have to revert to thinking of it as a physical object to explain its behavior adequately. Usually, however, we can best predict what move the computer is going to make by adopting the intentional stance towards it. We do not come up with our prediction by considering the laws of physics or the design of the computer, but rather, by considering the reasons there are in favor of the various available moves. Making an idealized assumption of optimal rationality, we predict that the computer will do what it rationally ought to do.”